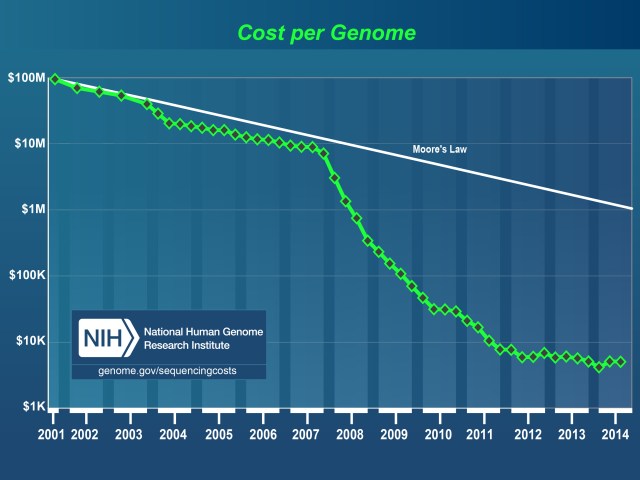

I recently heard this thing referred to as “the most overused slide in genomics” (David Klevebring). It might be: it shows an estimate of the cost of sequencing a human genome over time, and how that cost plummets around 2008. Before that point, the curve reflects Sanger sequencing; after it, the costs reflect second-generation sequencing (454, Illumina and SOLiD).

The source is the US National Human Genome Research Institute, and they’ve put some thought into how to estimate costs so that machines, reagents, analysis and people to do the work are included and the different platforms are somewhat comparable. One must first point out that the downstream analysis needed to make any sense of the data (assembly and variant calling) isn’t included. But the most important thing this graph hides, even if the cost estimates were perfect, is that to “sequence a genome” means something completely different in 2001 and in 2015. (Well, with third-generation sequencers that give long reads coming up, the old meaning might come back.)

> For data since January 2008 (representing data generated using ‘second-generation’ sequencing platforms), the “Cost per Genome” graph reflects projects involving the ‘re-sequencing’ of the human genome, where an available reference human genome sequence is available to serve as a backbone for downstream data analyses.

The human genome project was of course about sequencing and assembling the genome into high-quality sequences. Very few of the millions of human genomes resequenced since come anywhere close to that. As people in the sequencing loop know, resequencing with short reads doesn’t give you a genome sequence (and neither does trying to assemble a messy eukaryote genome with short reads only). It gives you a list of variants compared to the reference sequence. The usual short-read business has no way of detecting anything but single nucleotide variants and small indels. (And the latter depends … Also, you can detect copy number variants, but large-scale structural variants are mostly off the table.) Of course, you can use these edits to reconstruct a consensus sequence from the reference, but it would be a total lie.
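To make the "consensus from edits" point concrete, here is a minimal toy sketch in Python. The function name `apply_variants`, the `(position, ref_allele, alt_allele)` tuple layout, and the ten-base "reference" are all made up for illustration; real pipelines work on VCF and FASTA files with dedicated tools. It assumes 0-based positions and non-overlapping variants.

```python
def apply_variants(reference, variants):
    """Apply (position, ref_allele, alt_allele) edits to a reference string.

    Hypothetical toy format: positions are 0-based and variants
    are assumed not to overlap.
    """
    consensus = reference
    # Work from the right end so indels do not shift positions still to be edited.
    for pos, ref, alt in sorted(variants, reverse=True):
        if consensus[pos:pos + len(ref)] != ref:
            raise ValueError(f"reference allele mismatch at position {pos}")
        consensus = consensus[:pos] + alt + consensus[pos + len(ref):]
    return consensus


reference = "ACGTACGTAC"
variants = [
    (2, "G", "T"),    # single nucleotide variant
    (5, "CG", "C"),   # 1 bp deletion
    (8, "A", "ATT"),  # 2 bp insertion
]

consensus = apply_variants(reference, variants)
print(consensus)  # ACTTACTATTC
```

Note what the sketch does with every base that is not covered by a called variant: it copies the reference. That is exactly why the result looks like a genome sequence while saying nothing about anything the variant caller could not see.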

Again, none of this is news for people who deal with sequencing, and I’m not knocking second-generation sequencing. It’s very useful and has made a lot of new things possible. It’s just something I think about every time I see that slide.